
AI Ancient Scripts: 3 Reasons AI Still Can’t Decode Ancient Languages

We engineered networks to map the cosmos and simulate human thought. But hand the most advanced software on Earth a crumbling, 600-year-old book… and it fails on the very first page.

Decoding ancient scripts with AI remains one of the biggest unsolved problems in modern technology. It’s tempting to look around today and assume humanity has reached its intellectual peak.

We tend to judge brilliance by raw speed. Faster processors. Snappier algorithms. Every time we unearth a lost civilization that was far more advanced than we believed, we nod respectfully at its engineering. But its writing?

It remains an absolute brick wall.

At first, this just sounds like a quirky archaeology problem. You’d think throwing a few extra server farms at the issue would solve it, right? But the deeper you dig, the weirder the situation becomes. This isn’t merely a translation glitch. It highlights a massive blind spot in how we actually define ‘smart.’


Why AI Fails at Ancient Scripts (And It’s Not Hardware)

If you ask a tech enthusiast, they’ll probably tell you we just need more computing power to crack these dead languages. That couldn’t be further from the truth. The reason AI fails at ancient scripts has nothing to do with hardware.

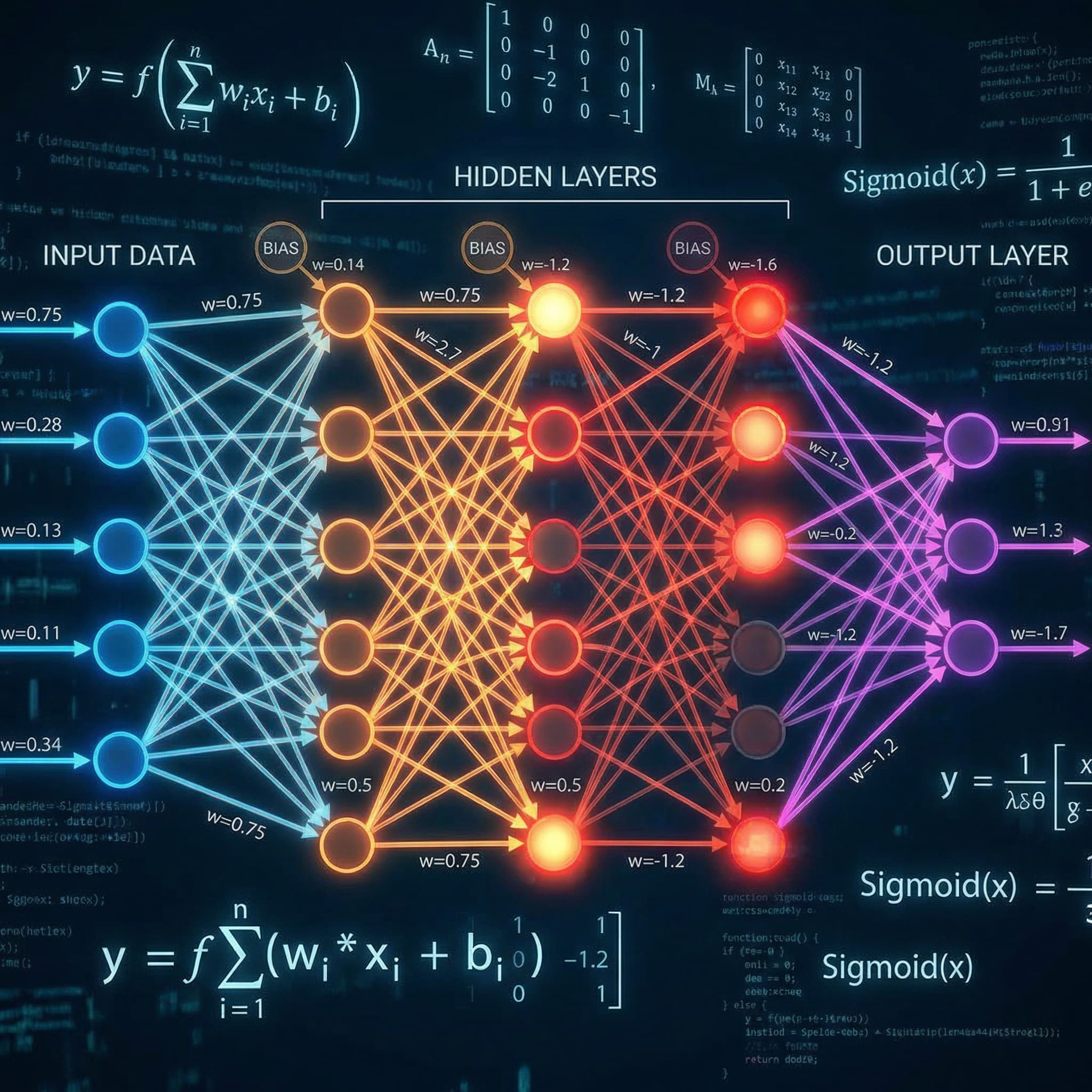

As various studies, including research from MIT CSAIL, have noted, the flaw is baked into how these systems learn. It comes down to three hard barriers that no amount of silicon can brute-force.

1. The “Small Data” Starvation

A weathered manuscript might contain 35,000 words. To you and me, that’s a decent-sized novella. To a language model trained on trillions of words of web text? It’s microscopic.

Machine learning desperately needs vast, repetitive examples to infer grammar rules on its own. Even as forgotten artifacts occasionally resurface, we simply don’t possess enough surviving text to feed the engine.
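To put rough numbers on that scarcity, here is a minimal Python sketch (our illustration, not taken from any study) that asks how often each possible pair of adjacent words could be seen, at best. The 5,000-word vocabulary is a hypothetical assumption, held fixed for both corpora so that only the length of the text changes.

```python
def observations_per_possible_pair(num_words: int, vocab_size: int) -> float:
    """Average number of times each possible adjacent word pair could be seen.

    A text of num_words words contains num_words - 1 adjacent pairs, spread
    (at best) evenly over vocab_size ** 2 possible pairs. Real text is far more
    uneven, so this is an optimistic ceiling, not a measured value.
    """
    adjacent_pairs = max(num_words - 1, 0)
    return adjacent_pairs / vocab_size ** 2


# Hypothetical vocabulary of 5,000 distinct words; not a figure from any real manuscript.
VOCAB = 5_000
manuscript = observations_per_possible_pair(35_000, VOCAB)
web_corpus = observations_per_possible_pair(1_000_000_000_000, VOCAB)

print(f"35,000-word manuscript: {manuscript:.4f} sightings per possible pair")
print(f"1-trillion-word corpus: {web_corpus:,.0f} sightings per possible pair")
```

Under these assumptions the manuscript offers about 0.0014 sightings per possible word pair, while the trillion-word corpus offers about 40,000. Because real language piles most of its usage onto a few common pairs, the rare pairs in a small manuscript fare even worse than this even spread suggests.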

2. The “Tweet Problem” of Antiquity

Scarcity triggers a second, deeply frustrating hurdle. Because many surviving inscriptions are roughly the length of a short text message, they carry almost no syntactic context.

Translation software relies heavily on watching how a word behaves at the beginning, middle, and end of a long sentence. Without sturdy paragraphs, there is no structural blueprint to map. Just scattered symbols hanging in a void. Ultimately, the problem is a lack of context.
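One way to see the cost of short texts is to count how many symbols sit at the very start or end of their inscription, where they have a neighbor on only one side. The Python sketch below is a simplified illustration with hypothetical, uniform lengths: 4,000 five-sign inscriptions versus 35,000 words split into 35-word sentences. The function name `edge_fraction` is our own.

```python
from __future__ import annotations


def edge_fraction(lengths: list[int]) -> float:
    """Share of all symbol tokens that sit at the first or last position of their text.

    A token at an edge has a neighbor on only one side (or none, for a
    one-symbol text), so it carries less positional context.
    """
    total = sum(lengths)
    if total == 0:
        return 0.0
    # A text of length n >= 2 has exactly two edge tokens; a 1-symbol text has one.
    edges = sum(min(n, 2) for n in lengths)
    return edges / total


# Simplified, hypothetical corpora made of equal-length texts.
seals = [5] * 4_000        # 4,000 inscriptions of 5 signs each
sentences = [35] * 1_000   # 35,000 words split into 35-word sentences

print(f"Short inscriptions: {edge_fraction(seals):.1%} of signs sit at an edge")
print(f"Long sentences:     {edge_fraction(sentences):.1%} of words sit at an edge")
```

With these assumptions, 40.0% of the signs in the short inscriptions sit at an edge, compared with about 5.7% of the words in the long sentences, so far fewer symbols can be observed in the middle of a structure.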

3. The Cultural Empathy Gap

This is the barrier software engineers consistently overlook.

An algorithm is essentially a steroid-injected pattern matcher. It has absolutely no grasp of human intent. It doesn’t care what a person living in 1420 meant when they scratched a weird curve into dried animal skin.

Does that shape represent a vowel? A tax record? A sacred ritual? The software can’t guess. It’s never felt the chill of winter, worshipped a sun god, or kept a dangerous secret.

The Three Scripts That Broke the Machine

These aren’t blurry, half-destroyed fragments pulled from a muddy trench. They are substantial, well-preserved texts. And they have publicly humiliated every cryptographic tool we’ve thrown at them. Even a pristine text is nearly impossible for AI to decode when the script behind it is unknown.

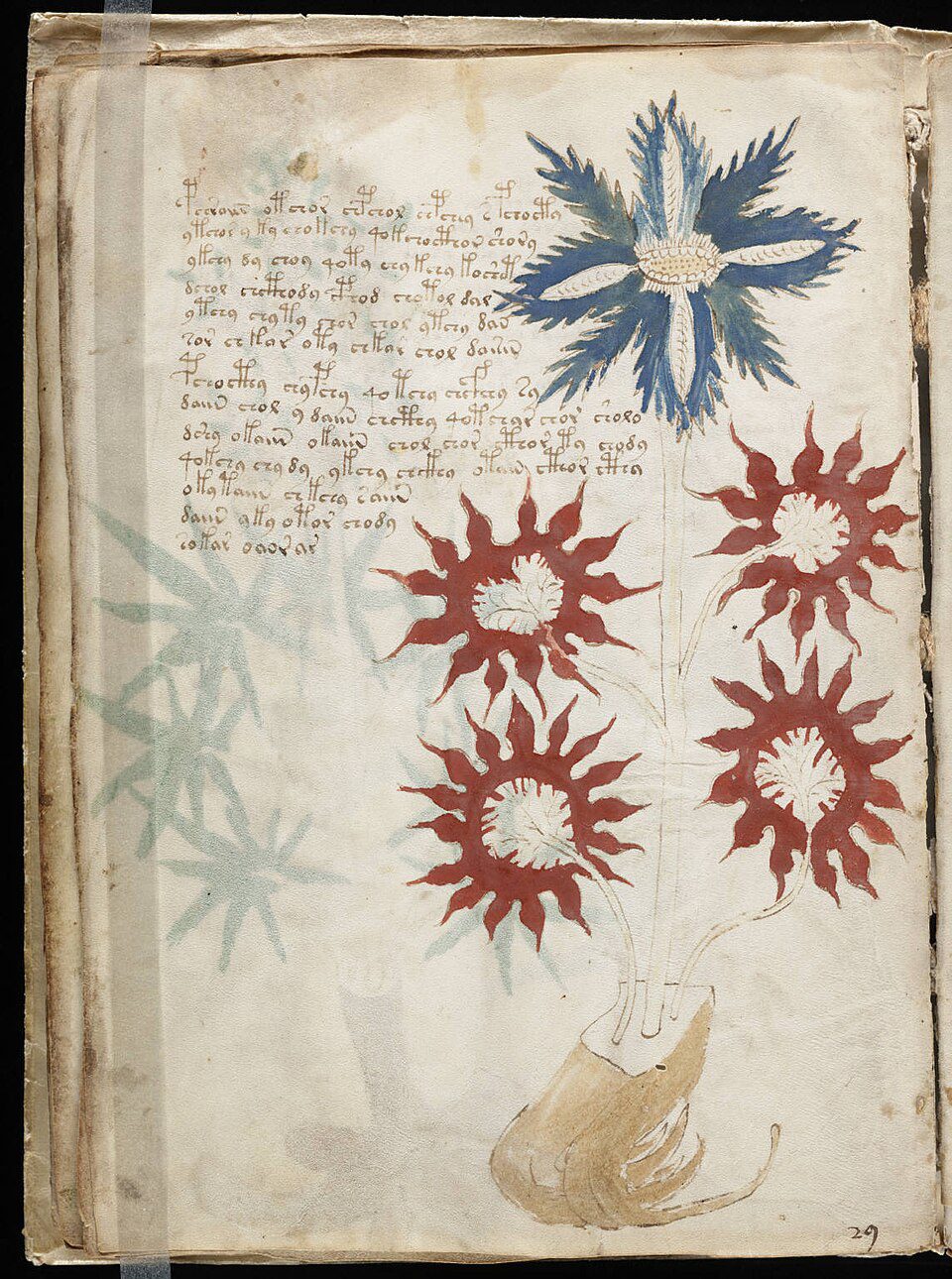

The Voynich Manuscript

Imagine 240 pages of elegant handwriting sitting next to sketches of plants that no one has convincingly matched to any known species. Computational linguistics studies suggest that without a parallel text, any proposed decoding remains statistically unstable.

Why it matters: It proves that without an anchor, AI cannot distinguish between a complex language and an elaborate, centuries-old prank.
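Statistical work on undeciphered scripts often starts from measures such as character entropy. The minimal Python sketch below (an illustration, not the method of any particular study) computes how unpredictable a single symbol is, and how unpredictable the next symbol is given the current one. These numbers describe structure; they cannot, on their own, say whether that structure carries meaning, which is exactly the anchor problem.

```python
from __future__ import annotations

import math
from collections import Counter


def entropy_bits(counts: Counter) -> float:
    """Shannon entropy, in bits, of the distribution given by counts."""
    total = sum(counts.values())
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def unigram_entropy(text: str) -> float:
    """H(X): how unpredictable a single symbol is on its own."""
    return entropy_bits(Counter(text))


def conditional_entropy(text: str) -> float:
    """H(next | current), estimated from adjacent symbol pairs via the chain rule."""
    pairs = list(zip(text, text[1:]))
    joint = Counter(pairs)                 # counts of (current, next)
    firsts = Counter(a for a, _ in pairs)  # counts of current symbol
    return entropy_bits(joint) - entropy_bits(firsts)


sample = "the cat sat on the mat"
print(f"H1 = {unigram_entropy(sample):.3f} bits, "
      f"H2|1 = {conditional_entropy(sample):.3f} bits")
```

The sample is an ordinary English phrase chosen only to show the calls; no Voynich transcription is used here. Run on a real transcription, the same functions would yield numbers, but a carefully constructed hoax could, in principle, produce structured numbers too.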

The Rohonc Codex

At 448 pages, the issue here isn’t a lack of material—it’s the sheer mathematics of it. Normal alphabets settle around 26 to 40 characters. This beast contains nearly 800 distinct symbols, which completely ruins statistical modeling.

Why it matters: It exposes the limits of statistical probability. When the math breaks, the machine goes blind.
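Classic frequency analysis assumes roughly one cipher symbol per letter of some known language. The naive Python sketch below (a simplified illustration; real cryptanalysis also uses letter pairs, word shapes, and search) ranks symbols by frequency and pairs them with a reference ordering of English letters. `ENGLISH_ORDER` is one commonly cited approximate ordering, and the 800-symbol text is a synthetic stand-in, not a transcription of the Rohonc Codex.

```python
from __future__ import annotations

from collections import Counter

# One commonly cited approximate ordering of English letters by frequency
# (an assumption; exact orderings vary by corpus).
ENGLISH_ORDER = "ETAOINSHRDLCUMWFGYPBVKJXQZ"


def frequency_ranking(text: str) -> list[str]:
    """Distinct non-space symbols of text, most frequent first."""
    counts = Counter(ch for ch in text if not ch.isspace())
    return [sym for sym, _ in counts.most_common()]


def rank_match(cipher_text: str, reference_order: str) -> dict[str, str | None]:
    """Naive frequency analysis for a simple substitution cipher.

    Pairs the i-th most common cipher symbol with the i-th most common letter
    of the reference language. Symbols ranked beyond the reference alphabet
    get no candidate (None).
    """
    ranked = frequency_ranking(cipher_text)
    return {
        sym: reference_order[i] if i < len(reference_order) else None
        for i, sym in enumerate(ranked)
    }


# Synthetic text using 800 distinct private-use code points as stand-in symbols.
toy_codex = "".join(chr(0xE000 + i) for i in range(800))
mapping = rank_match(toy_codex, ENGLISH_ORDER)
unmatched = sum(1 for letter in mapping.values() if letter is None)
print(f"{len(mapping)} symbols, {unmatched} with no letter to map to")
```

It prints `800 symbols, 774 with no letter to map to`: with an inventory that large, the one-symbol-per-letter assumption collapses before any statistics can help.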

The Indus Script

The silent voice of the Indus Valley civilization. We have over 4,000 physical artifacts stamped with these marks. The catch? Most of them carry only four or five signs.

Why it matters: It highlights the “Tweet Problem”—showing that without deep context and syntax, data points remain just data points.

But Wait… Didn’t AI Just Read the Vesuvius Scrolls?

In 2023, the internet went wild. Researchers successfully used machine learning to read charred papyrus scrolls dug out of the ash of Mount Vesuvius.

The Vesuvius Challenge was pitched as the ultimate victory for code-breaking tech.

Here’s the detail everyone glossed over. The software could read those crispy scrolls because the underlying text was ancient Greek. We already knew the language. We had the dictionary.

The obstacle was merely visual: spotting warped letters hidden inside 3D-scanned carbon. Point that exact same sophisticated tech at the Voynich, and it hits a wall. There is no known alphabet to anchor against. Just shapes staring back in complete silence.

The AI Failure Matrix

To put it bluntly, each of these texts tests our modern tools in its own way.

| Subject | Primary AI Barrier | Current Status |
|---|---|---|
| Voynich Manuscript | No confirmed alphabet or known language. The “Zero Anchor” Problem. | Undeciphered |
| Rohonc Codex | ~800 unique symbols. Breaks frequency analysis. | Undeciphered |
| Indus Script | Inscriptions are mostly 3–7 symbols long. The “Tweet Problem.” | Undeciphered |
| Vesuvius Scrolls | Visual only: faint ink inside carbonized papyrus. The language (ancient Greek) was already known. | Partially read |

Could AI Ever Solve These Codes?

Will a neural network ever crack the Voynich Manuscript or the Indus Script? Yes. But not alone.

We need a bridge. A digital Rosetta Stone. Future decipherment won’t be a solo victory for artificial intelligence. It will be a hybrid operation. A human historian must frame the cultural boundaries, feeding highly specific, localized parameters into an AI that handles the statistical heavy lifting at speeds we simply can’t match.

AI is the engine. But humans must lay the tracks.

Why the Human Brain Still Wins

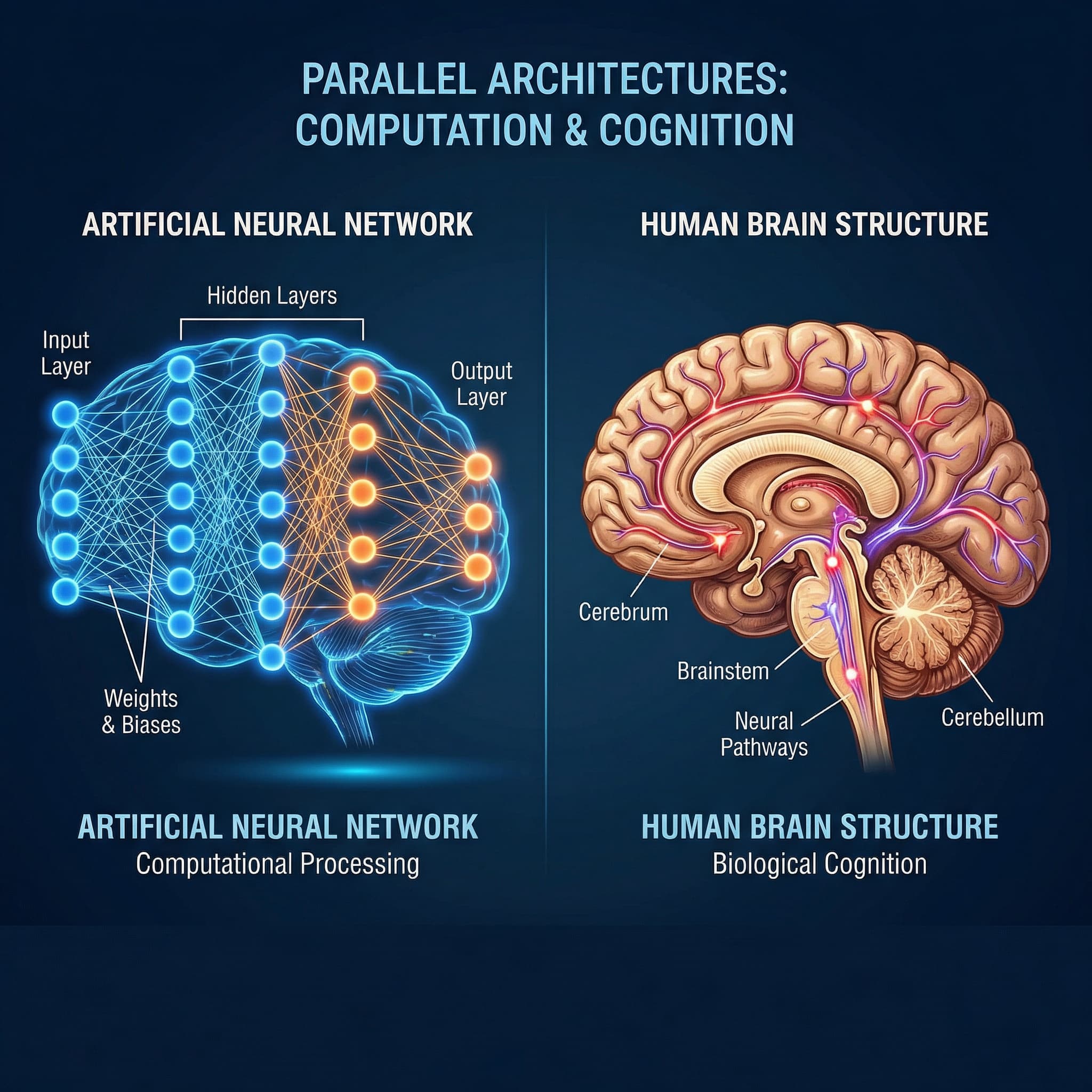

Computers are built to spot trends. Humans are wired to seek meaning.

And meaning isn’t just an optional plugin you can staple onto a dataset after the fact. A neural network can map the statistical distribution of the Rohonc Codex with chilling precision. It can cluster the data, flip it, and cross-reference it endlessly.

But true comprehension requires a leap of empathy. It requires a living mind capable of looking at a strange manuscript and asking, “Why would a real person spend years of their life writing this?”

Until we build a machine that fears mortality, experiences awe, or understands the primal need to keep a secret, true interpretation will remain an exclusively human trait.

The Double-Edged Age of Intelligence

This paradox perfectly captures the weird, fragile era we’re living in right now. We’re splitting atoms and actively boiling our own oceans at the exact same time.

The future isn’t a fixed destiny written in code. It depends entirely on what we choose to prioritize. Much like the durability of Roman concrete remained a baffling mystery for 1,700 years until we finally asked the right chemical questions, these ancient scripts will eventually yield.

We are desperately searching the stars for alien intelligence while completely failing to understand our own history. Among all the historical mysteries we keep getting wrong, this one delivers the sharpest wake-up call.

We don’t just need faster processors; we need a better understanding of what makes us human. Until a machine can comprehend the fear, awe, and necessity that drives a person to put ink to parchment, ancient history will remain our most unbreakable code.
